Q1:

I would like to ask for advice on your recently published STRESS tool. I would like to use it to identify residues that might be involved in allostery, however in the case of surface critical residues, it currently reports only up to ten residues per binding pocket. Is there a way to "hack" the identify_high_confidence_BL_sites.py script (which writes this file) to write all such residues, according to some probability cutoff? (I’m a Perl programmer, with relatively limited python experience.) Thank you in advance.

A1:

I have modified 2 of the c scripts, so you might want to re-compile using these new c scripts before running your calculation.

Now — how exactly did I modify the scripts, and how can you expect your new output to be different from what you were getting previously? I was a little bit unsure about your query, where you wrote "to write all such residues, according to some probability cutoff". As you know, there was an original cutoff of 10 residues. I have modified the scripts such that the new limit is now set to 15 residues. You can change this cutoff number to be any arbitrary value that you wish, simply be changing the c scripts in the way that I’ve changed them, and then recompiling. To see the specific changes that I’ve made, you can run the Unix "diff" command to compare the old C scripts with the new ones. Doing so, you’ll see that:

In the script bindingSiteMeasures.c, I have replaced instances of "10" with "15" in lines 77, 79, 217, 219, 240, 242.

In the script surfaceProbe.c, I have replaced instances of "10" with "15" in lines 1227, 1229, 1235, 1237.

You could try running things using these 2 new scripts, and you can let me know how things go.

Q2:

Basically I would like to use the tool to identify putative allosteric residues in a protein, i.e. get a list of them.Theoretically, one should be able to set some kind of probability cutoff, which holds for most residues. i.e. that there is a certain probability that they are allosteric. After reading the paper (and I cannot say I understand everything in the methodology) it seems that there are two problems: first that the surface and interior residues are identified by different methods (so the likelihood of being allosteric may be different for the two sets); second that in the case of the surface sites there is a limit of 10. So I wonder whether it is somehow possible to adjust/set the internal cutoffs of the tool (Jaccard?) to get a – ideally full – list of surface and internal residues that are more or less equally likely to be allosteric. Do you think it is doable? And if not with this tool, can you suggest one?

A2:

I think I understand what you mean. The 2-fold problem here is:

(1) since the surface and interior allosteric residues are identified by very different methods, there is a sort of "apples and oranges comparison" that makes it difficult to assign a unified, consistent probability rule (of being allosteric) to both sets of residues

(2) for the case of surface residues, there is an (admittedly) arbitrary cutoff threshold of 10 residues per site.

With respect to problem (1): I agree that the two methods are very different, but the idea assigning a numeric likelihood or probability (of being allosteric) in either case is actually not really that straightforward. In both cases, these are only predictions, and we have tried to make these predictions as accurate as possible by comparing our predicted allosteric residues with known allosteric sites in proteins. The two methods are very different because the allosteric mechanisms in the interior and on the surface are so distinctly different. If you are working with just one protein, and you need greater sensitivity, I might suggest using molecular dynamics, which would give more accurate predictions. Relevant studies that use such approaches might include:

del Sol, A., Fujihashi, H., Amoros, D., and Nussinov, R. (2006). Residues crucial for maintaining short paths in network communication mediate signaling in proteins. Mol. Syst. Biol. 2(1).

Ghosh, A., and Vishveshwara, S. (2008). Variations in Clique and Community Patterns in Protein Structures during Allosteric Communication: Investigation of Dynamically Equilibrated Structures of Methionyl tRNA Synthetase Complexes. Biochemistry. 47, 11398-11407.

Ming, Dengming, and Michael E. Wall. “Quantifying allosteric effects in proteins.” Proteins: Structure, Function, and Bioinformatics 59.4 (2005): 697-707.

Mitternacht, S. and Berezovsky, I.N. (2011). Binding leverage as a molecular basis for allosteric regulation. PLoS Comput. Biol. 7, e1002148.

Rousseau, F. and Schymkowitz, J. (2005). A systems biology perspective on protein structural dynamics and signal transduction. Curr. Opin. Struct. Biol. 15, 23–30.

With respect to problem (2): We have established the parameters of the surface-site identification scheme using a known set of allosteric residues. That is, our parameters were established empirically to best capture known allosteric sites. The details of all this can be found in the Supplementary Materials of the paper, specifically in the Supp section 3.1-a-iii "Defining & Applying Thresholds to Select High-Confidence Surface-Critical Sites". I would be happy to help you modify the code to use different parameters, but I would advise against changing them, since again, they were empirically optimized.

Unfortunately, I do not know of any one tool or software that would provide both surface and allosteric residues within one suite, and we have tried as best as possible to do essentially that.

Q3:

Thank you very much. I plan to analyze a relatively large number of proteins, so MD doesn’t seem to be the right choice. I thought that STRESS was optimized empirically, so I would prefer to change it a little as possible. I made a few tests, and I would like to ask for some more help in interpreting the results. 1) is STRESS suitable for the analysis of protein complexes? 2) Some proteins have very large ligands, while STRESS seems to use a small ligand to identify the cavities. I’m not familar with the internals, but that may matter a lot for the results in proteins where a ligand is large, i.e. a dinucleotide, or a cofator. Is it possible to somehow adjust this for a given protein structure in the analysis?

Finally, it is not entirely clear how to use the firs two columns of the table of surface critical residues, i.e. how to identify the rows that actually matter, and how to set a threshold of reported residues so that it contains all residues of a site not just 10.

A3:

There are a few questions that you pose here, so I’ll address each one in turn:

With respect to: "I plan to analyze a relatively large number of proteins, so MD doesn’t seem to be the right choice."

–> In that case, I agree that STRESS is a good option.

With respect to: "1) is STRESS suitable for the analysis of protein complexes?"

–> The answer here really depends on the nature of the complex and the nature of the allosteric residues that you’re trying to identify. If you’re considering an obligate protein complex (ie, a complex in which the proteins must be together in order to function, such as a STAT dimer), then STRESS is a great tool. In such a case, STRESS will attempt to identify both internal and surface allosteric residues in the context of such an obligate complex. In fact, STRESS was parameterized in the context of proteins, some of which were studied in complex form (in the PDB, we studied the so-called "biological assemblies"). In addition, we studied conservation (as a type of validation) using many proteins in complex form.

However, you must be very careful — it may not be ideal to use STRESS to study transient complexes (for example, a protein kinase interacting with its target during target phosphorylation). In such a case, consider the surfaces of normally-exposed proteins — these surfaces may have biologically functional allosteric sites, but those surfaces will be occluded when the proteins are in complex form. As a result of that surface occlusion, STRESS will not have access to those surfaces in the surface-critical identification module. Also be aware that the network of interconnecting residues will have very different topological properties when the proteins are in their complex vs. monomeric forms (for instance, a given residue that shows up as a hub in the network of the complex may not be a hub in the monomeric network). Thus, again, in such a transient complex, it may not be appropriate to use STRESS to identify interior allosteric residues (unless you’re only interested in the interior allosteric residues that function when the protein is in complex form).

Having said all this, I should mention that most protein complexes that occur in the PDB are less likely to be transient (transient interactions are difficult to crystalize), so, in that regard, I’d say that STRESS is probably ok for most complexes in the PDB.

With respect to: "2) Some proteins have very large ligands, while STRESS seems to use a small ligand to identify the cavities. I’m not familar with the internals, but that may matter a lot for the results in proteins where a ligand is large, i.e. a dinucleotide, or a cofator. Is it possible to somehow adjust this for a given protein structure in the analysis?"

–> Unfortunately, it is not possible to change the 4-atom ligand. You’re absolutely correct about this, and it is indeed a consideration that we took into account. However, the 4-atom ligand ism an inherent limitation in the STRESS software. For 3 reasons, it we decided to stick to just 4 residues, and it is very difficult to change that:

1) The software performs MC and needs to measure atom-ligand distances many times. Increasing the number of atoms in the ligands substantially increases the running time of the software. Increasing to just 5 atoms may increase the running time by more than 10-fold.

2) We wanted to make STRESS as general as possible, and STRESS does not assume a-priori knowledge of the specific ligands of a given protein. Thus, to provide such generality, we decided to use a 4-atom ligand (as many natural ligands may be pretty small).

3) The STRESS software was actually developed based on a code precursor written by one of the other authors a few years ago. That author hard-coded the 4-atom ligand requirement into the software’s architecture, and it was very difficult to change that setup.

With respect to: "it is not entirely clear how to use the firs two columns of the table of surface critical residues, i.e. how to identify the rows that actually matter…"

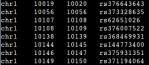

–> The first column (integers) is actually meaningless to the user — it only serves as an arbitrary index for the site when the software is run, and it was used by us as an internal tracker (index) for debugging purposes. The second column (floating-point numbers) indicates the actual binding leverage score for a site. High scores designate high binding leverage scores (ie, sites that strongly couple to the protein’s motions). Columns 3 and over designate the actual identities of the residues within that site.

With respect to: "how to set a threshold of reported residues so that it contains all residues of a site not just 10"

–> This can be done by changing the code in the way that I had detailed for those 2 other scripts (ie, where I changed the threshold from 10 residues to 15 residues). I would be happy to help you change it to another threshold if you like, but everything should work if you change the code based on my earlier changes.

Q4:

Thanks a lot. So, if I understand correctly, STRESS can handle pdb entries with multiple chains (i.e. "motions" can be transmitted between residues of different chains, and having more than one chain in an entry does not compromises its performance) – and it is up to the user to decide whether it biologically makes sense or not – distinguishing between obligate and transient complexes is far from being straightforward. Ligand size might be a bigger problem for me.

Is there a recommended cutoff for binding leverage score (i.e a score below the likelihood of being allosteric is negligible)? Also it would be great if one could set the number of printed residues (in the surface critical file) not by their maximum allowed number (10 or 15), but by some statistical measure, that quantifies their likelihood of being allosteric. (In my tests for several sites the number of printed residues is lower than 10, I guess in those cases a cutoff like this is used.)

A4:

A few items here, so I’ll address each in turn:

With respect to: "I understand correctly, STRESS can handle pdb entries with multiple chains (i.e. "motions" can be transmitted between residues of different chains, and having more than one chain in an entry does not compromises its performance) – and it is up to the user to decide whether it biologically makes sense or not"

—> Yes — your interpretation is 100% correct.

With respect to: "distinguishing between obligate and transient complexes is far from being straightforward"

—> Although it is true that it is not straightforward, I have found that it is quite reasonable to treat most proteins as stable complexes if they’ve been deposited in the PDB, for 2 reasons: 1) If they were truly very transient in nature, it would be difficult to crystallize them, and 2) if you’re studying a protein in the complex form, then it is often the case that the protein is in its biologically active state. In such a cases, identifying allosteric residues in this state is the most reasonable way to go (ie, the allosteric residues within the biological state are, of course, generally what’s of interest).

With respect to: "Ligand size might be a bigger problem for me."

—> Correct — ligand size will be a problem if most of your ligands are quite large. However, STRESS is also designed to not only find the known ligand-binding sites, but also "cryptic allosteric sites" – that is, sites that do not serve as allosteric residues in normal biological context, but which may function allosterically in artificial contexts (for instance – many drug binding sites on proteins do not serve as true biological binding sites within the normal functioning of a cell, but drug binding to the site may nevertheless impart allosteric consequences).

With respect to: "Is there a recommended cutoff for binding leverage score (i.e a score below the likelihood of being allosteric is negligible)?"

—> There really is not cutoff. The reason is that different proteins (being of very different sizes and topologies) will exhibit such different distributions of binding leverage scores. Using a universal cutoff would be unrealistic, given the vastly different score distributions for different proteins. Thus, rather than using a ‘universal cutoff’, we instead devised a scheme (detailed in the supplement) to find a reasonable cutoff using the distribution of binding leverage scores for each protein.

With respect to: "Also it would be great if one could set the number of printed residues (in the surface critical file) not by their maximum allowed number (10 or 15), but by some statistical measure, that quantifies their likelihood of being allosteric. (In my tests for several sites the number of printed residues is lower than 10, I guess in those cases a cutoff like this is used.)"

—> I do see what you mean, but this would really not be straightforward. In order to do this, one would need to do the following two things (the first of which would be very difficult to do theoretically, and the second of which would entail a lot of extra work):

1) One would need to devise a statistically rigorous means of assigning confidence in the first place. Doing this may entail assumptions about what the distributions (be they distributions of scores or measures of confidence, etc) would be. For instance, one may need to assume that binding leverage scores are normally distributed. However, we’ve observed that normal distributions are generally not applicable. What’s more difficult is trying to justify that one single family of distributions (ex: exponential) describes the distributions of scores for all proteins universally, and this really is not the case. Thus, it would be theoretically quite difficult to devise a truly justifiable and rigorous statistical test.

2) Just from a technical point of view — we iteratively merging the sites using jaccard scores, etc (details in the Supplement). Re-engineering the pipeline that we already have (assuming this could be justified — see note #1 above) would entail a lot of work. Plus, I wouldn’t recommend putting the code under such surgery, especially if you have limited experience with C.

In any case, I think that’s all for now. Certainly feel free to let me know if there’s anything else with which I can help.